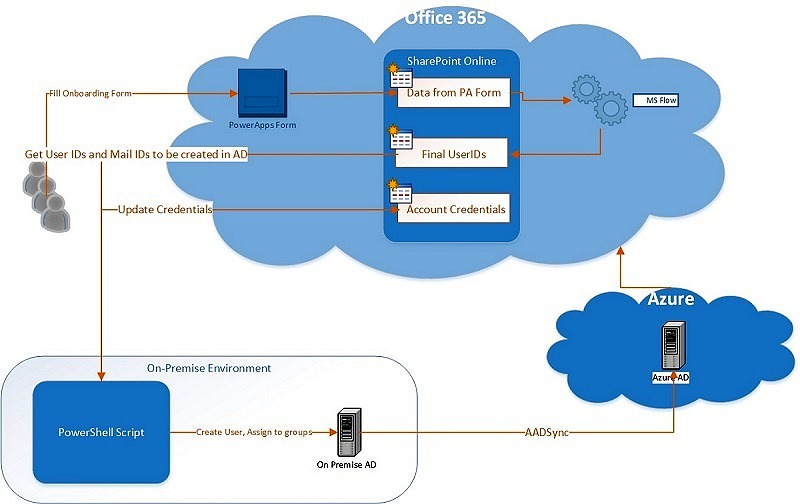

In one of my previous articles, I talked about How to use Azure Text Analytics Service to Automatically Tag SharePoint Documents. One of the alternatives for implementation I pointed out was using Office 365 Management Activity API to identify when a document gets uploaded and trigger the metadata tagging.

In this article, I am going to go into a bit more detail about how that can be achieved. By the end, it should be fairly clear that a similar solution can also cover various other scenarios for automating SharePoint governance.

Introduction

Summarizing the introduction from MSDN, the Office 365 Management Activity API can be used to retrieve information about user, admin, system, and policy actions and events from Office 365 and Azure AD activity logs. The API is a REST web service and relies on Azure AD and the OAuth2 protocol for authentication and authorization. To access the API, we first need to register an application in Azure AD and configure it with the appropriate permissions. This enables the application to request the OAuth2 access tokens it needs to call the API.

The Office 365 Management Activity API aggregates actions and events into tenant-specific content blobs, which are classified by the type and source of the content they contain. We’ll be focusing on only one content type, “Audit.SharePoint”, in our case.

Surprisingly, this powerful API doesn’t provide direct access to granular events. That is, we get all events from SharePoint (file accessed, uploaded, permission changed, etc.) and have to loop through them and filter out the events on which we want to act. Not so efficient, Microsoft! I was hoping this would get added, but it seems there has not been much focus on these APIs for the past few months.

Solution Alternatives

There are a few ways in which we can process data received from the API.

Attach a webhook

The webhook gets notified when a content blob containing SharePoint events is ready. This sounds efficient but, in my experience, it is not very helpful, mainly because there is no granular access to events. Even for a medium-sized organization, it generates a lot of notifications (each containing multiple events), which take hours to filter and process. This completely defeats the purpose of notifications. A few points about how this works:

- The Office 365 Management Activity API compiles a batch (content blob) of, say, 50 events.

- It sends a notification to the webhook. The notification only says that “some” SharePoint-related events have occurred. It provides a contentUri where we need to look to find out “what” events have occurred.

- We need to add these content URIs to an Azure Queue to be processed later.

- A Web Job reads entries from that queue, reads the actual events from that content URI, loops through them to find the events we are interested in and then takes the action when such events are found.

Webhooks are being de-emphasized by Microsoft because of the difficulty in debugging and troubleshooting.
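To make the webhook flow above a bit more concrete, here is a minimal sketch of the receiving side. It assumes the notification body is a JSON array of objects that each carry a contentType and a contentUri, and it only extracts the URIs; the class and method names are mine, and the actual Azure Queue call is left out.

```csharp
using System.Collections.Generic;
using Newtonsoft.Json.Linq;

public static class WebhookNotifications
{
    // Returns the contentUri of every Audit.SharePoint blob announced in a
    // webhook notification body. Assumption: the body is a JSON array of
    // objects with "contentType" and "contentUri" properties.
    public static List<string> ExtractContentUris(string notificationBody)
    {
        var uris = new List<string>();
        foreach (JToken item in JArray.Parse(notificationBody))
        {
            string contentType = (string)item["contentType"];
            string contentUri = (string)item["contentUri"];
            if (contentType == "Audit.SharePoint" && !string.IsNullOrEmpty(contentUri))
            {
                uris.Add(contentUri);
            }
        }
        return uris;
    }
}
```

Each returned URI would then be added to the queue, and the web job would read the events behind it later.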

Run as a scheduled job

The major problem I found with the previous approach is that during a normal working day, when users are using SharePoint, it generates hundreds of notifications. Each may contain thousands of events, so in practice our web job runs continuously just to find the events we are interested in, and the queue becomes really long if the web job can’t process it fast enough.

Personally, until Microsoft provides webhook notifications based on granular events (file uploaded, file deleted, etc.), I would rather save the effort (and related costs) of using a webhook, Azure Queue and web job 🙂 So the alternative is to have a console application (which could still run as a web job) that runs every 30-60 minutes, checks the SharePoint-related events, loops through them to find the events we are interested in, and takes action (tagging the document, in our case).

So, in this article, we are going to focus on the second approach mentioned above.

Register Application in Azure AD

As mentioned above, the Office 365 Management Activity API is a REST web service and relies on Azure AD and the OAuth2 protocol for authentication and authorization, so we first need to register an application in Azure AD.

Create Application

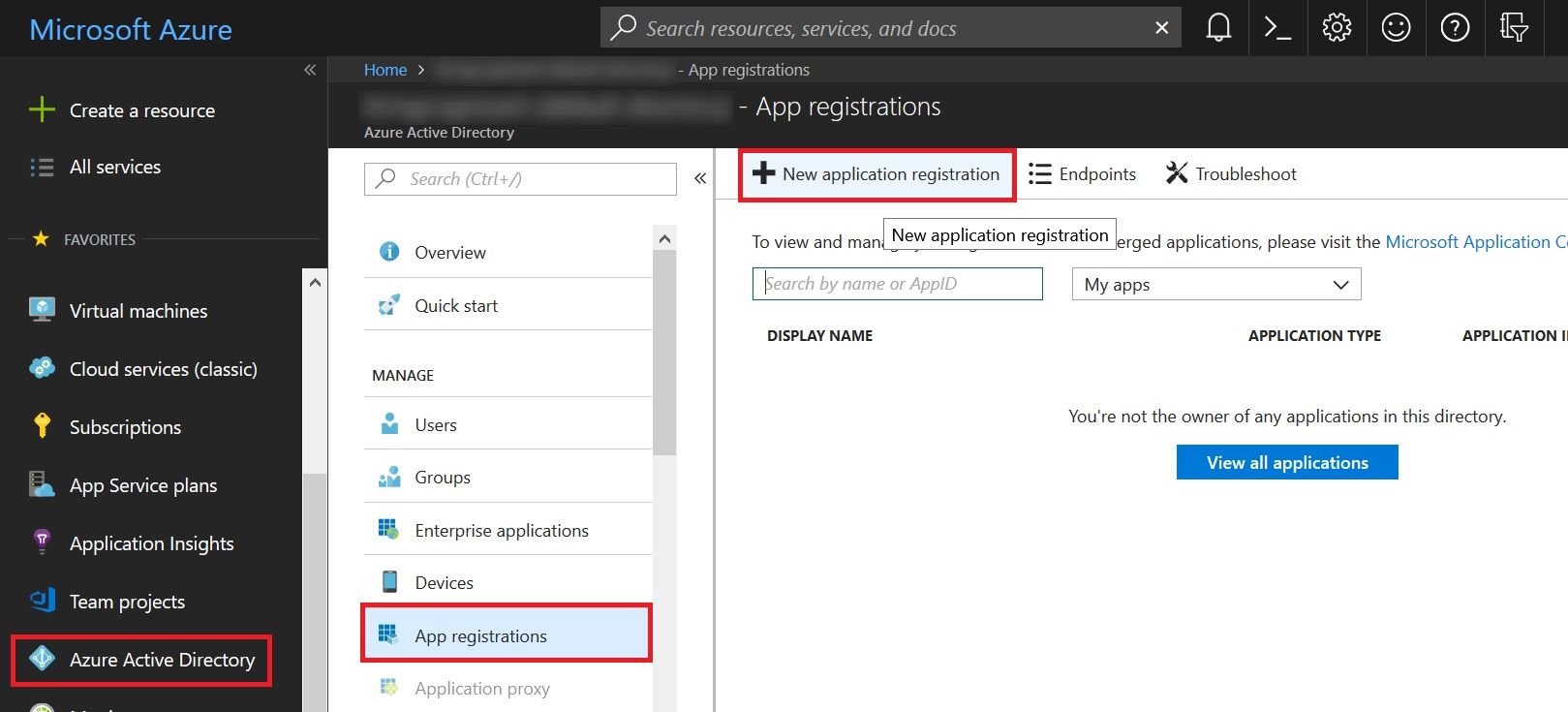

Go to portal.azure.com, click on Azure Active Directory. Select App Registration and click on New Application Registration.

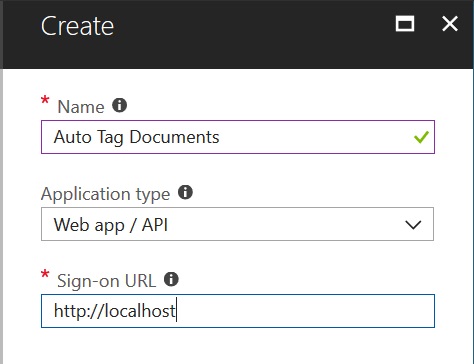

Fill in the form:

- Name: something like “Auto Tag Documents”

- Application Type: “Web app/API”

- Sign-on URL: “http://localhost”. Since we only make web API calls, we’ll not be using the sign-on URL for signing in, so you can give any value. We reuse this value as the redirect URI in the admin consent step later.

Then click Create.

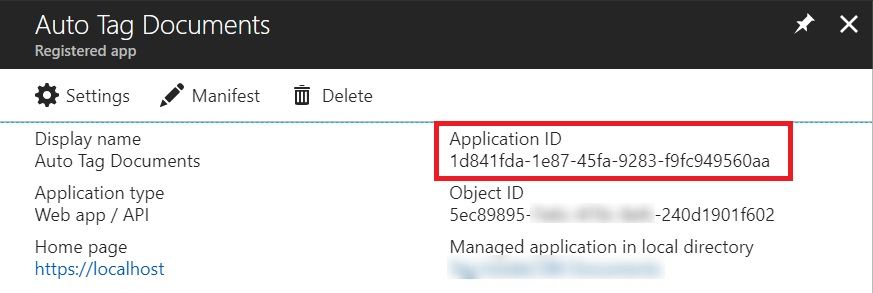

Get Client ID

After the application is created, note down the Application ID. This will be our Client ID, which we’ll use to get the OAuth token later.

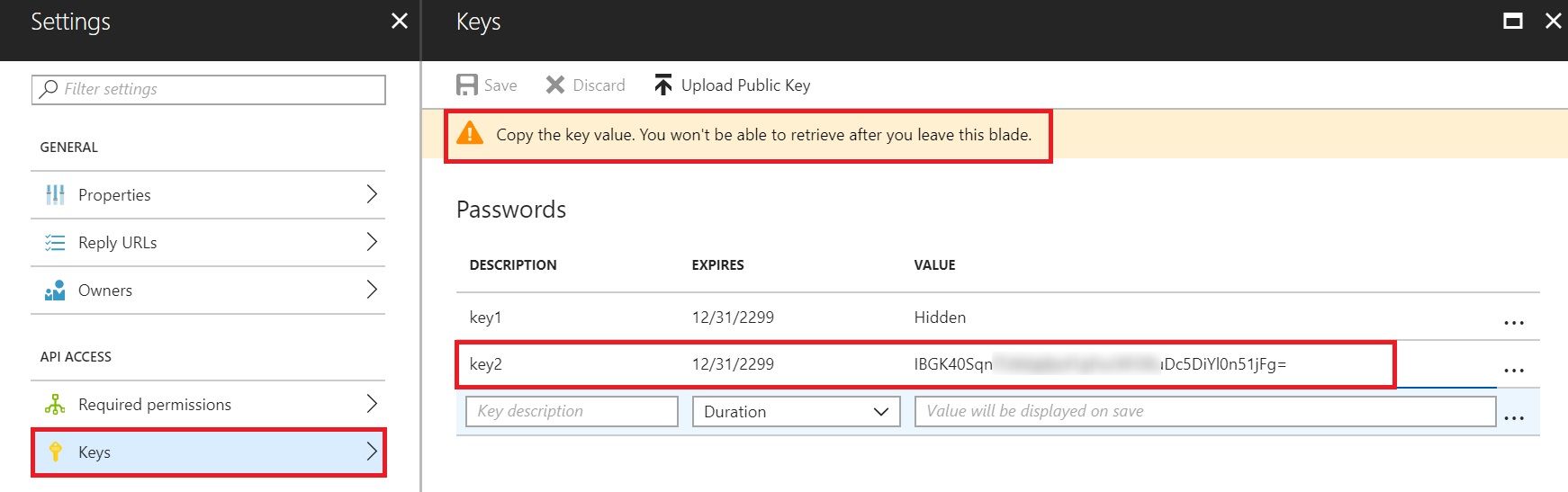

Generate Client Secret

Let’s now generate a Client Secret which in combination with Client ID and Tenant ID will give us the Access Token to call the API.

Click on the Settings of the application and then, in the settings pane, click on Keys. Fill in the name of the key, pick its duration from the drop-down and save. The generated key is displayed after the save. Copy it and store it somewhere safe, since the portal does not show it again once you leave the page.

Assign Permissions

Now that the application has been registered and we have noted down all the required values, let’s give the application the permissions it needs to call the API and read the audit data.

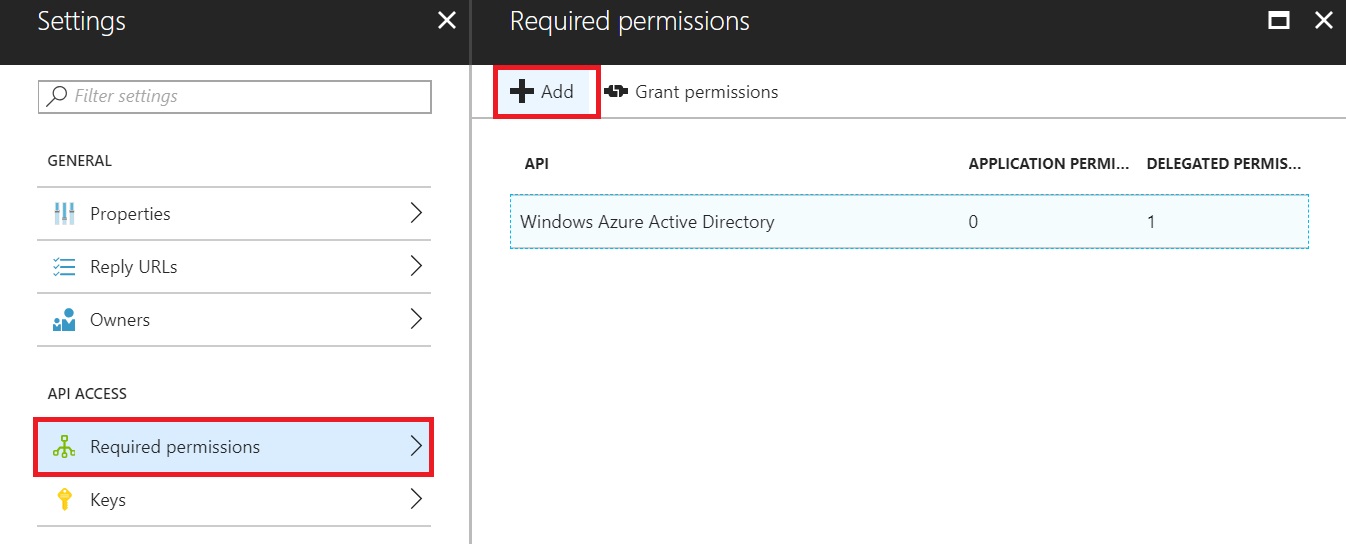

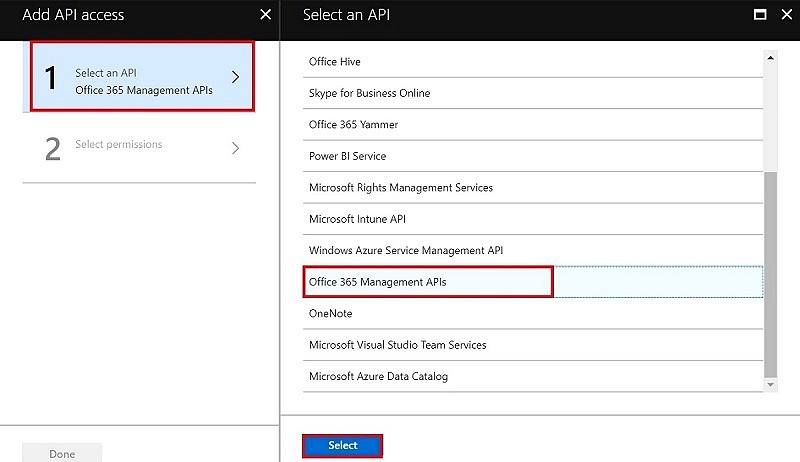

Go to Application Settings and Select “Required Permissions” under API Access. Click on “Add”.

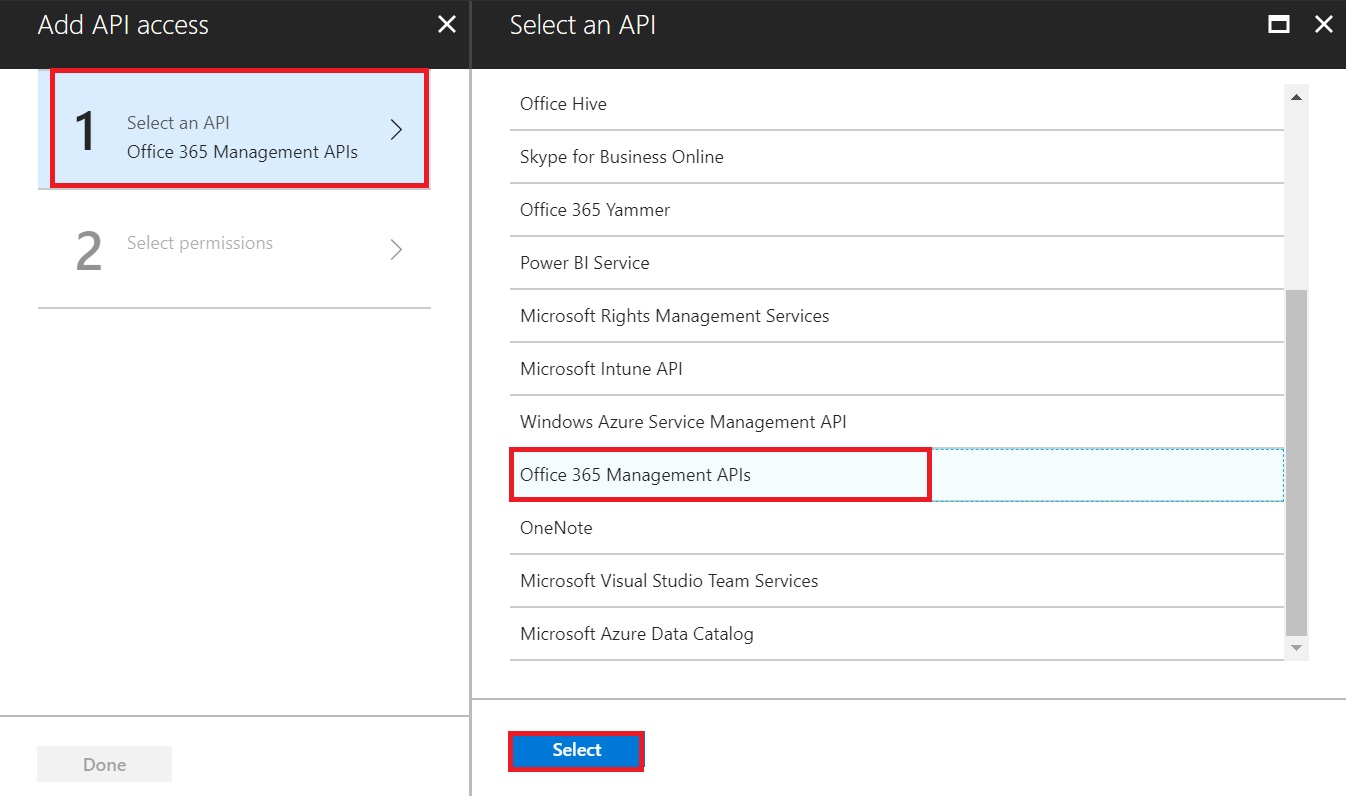

A new Add API Access pane will open. Click on “Select an API”, select “Office 365 Management API” and click on “Select”.

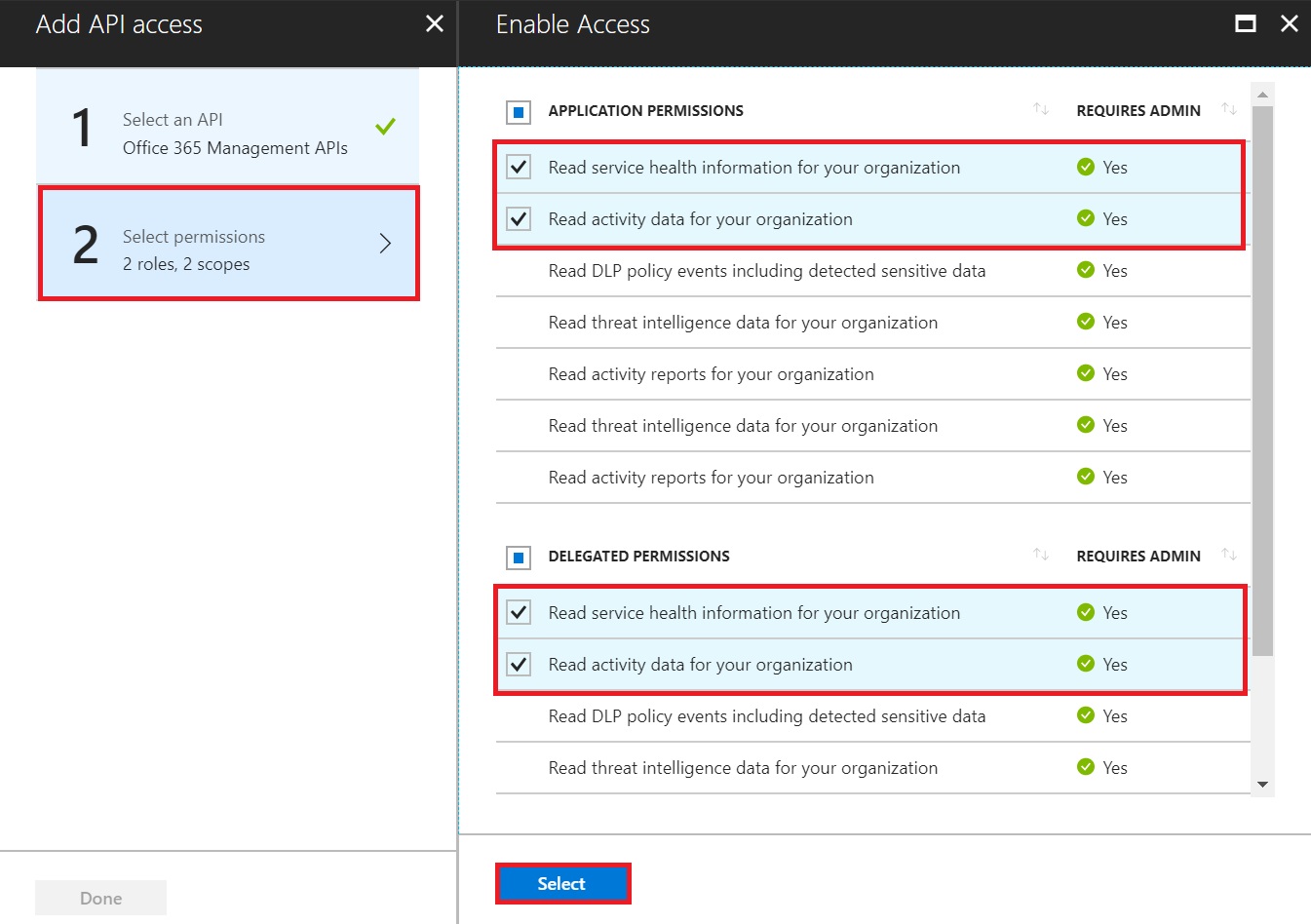

Another screen will open to select the required permissions. Select the following and click Select:

- Under Application Permissions

  - Read Service Health Information for your organization

  - Read Activity Data for your organization

- Under Delegated Permissions

  - Read Service Health Information for your organization

  - Read Activity Data for your organization

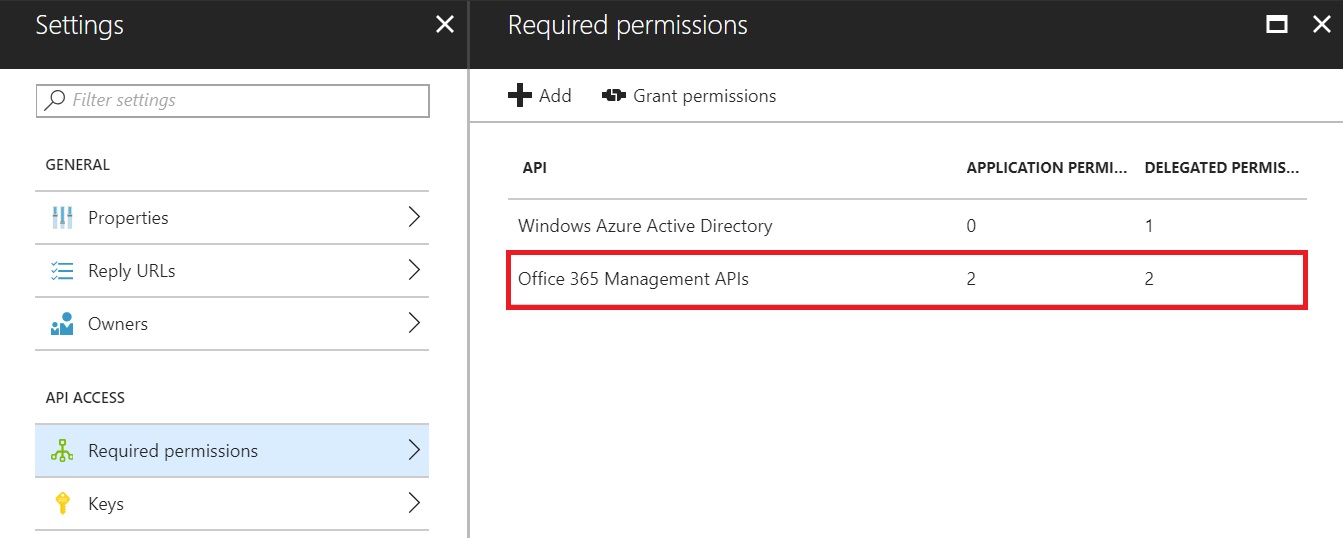

Once done, this is what the Required Permissions page looks like.

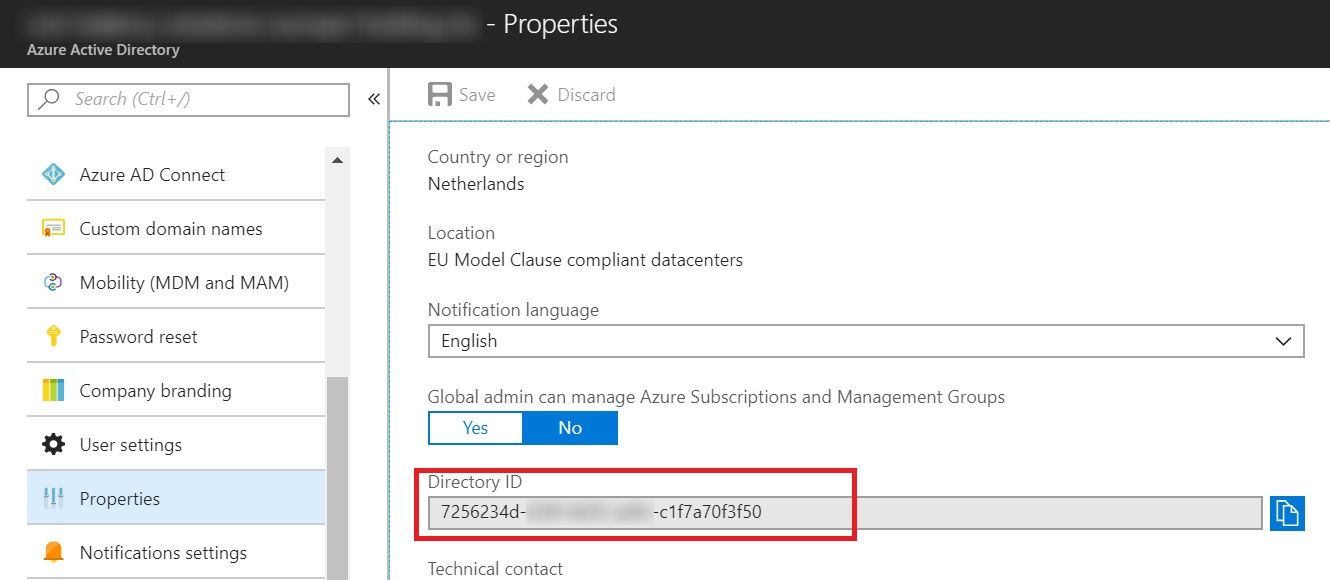

Get Tenant ID

There are various ways to find out your tenant ID but since we are already in Azure AD, the easiest is to get it from the properties. Just scroll down the left pane and click on Properties. Now scroll down the right pane and copy the value under Directory ID.

At this point, our application has been registered for the API call.
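We now have three values (Client ID, Client Secret and Tenant ID) that every function later in this article takes as parameters. Rather than hard-coding them, one option is to read them from environment variables. The sketch below shows that idea; the class and the variable names O365_CLIENT_ID, O365_CLIENT_SECRET and O365_TENANT_ID are hypothetical, so use whatever fits your setup.

```csharp
using System;

public sealed class ApiSettings
{
    public string ClientId { get; private set; }
    public string ClientSecret { get; private set; }
    public string TenantId { get; private set; }

    public ApiSettings(string clientId, string clientSecret, string tenantId)
    {
        ClientId = Require(clientId, "clientId");
        ClientSecret = Require(clientSecret, "clientSecret");
        TenantId = Require(tenantId, "tenantId");
    }

    // Reads the three values from environment variables.
    // The variable names are an assumption made for this sketch.
    public static ApiSettings FromEnvironment()
    {
        return new ApiSettings(
            Environment.GetEnvironmentVariable("O365_CLIENT_ID"),
            Environment.GetEnvironmentVariable("O365_CLIENT_SECRET"),
            Environment.GetEnvironmentVariable("O365_TENANT_ID"));
    }

    // Fails early with a clear message when a value is missing or blank.
    private static string Require(string value, string name)
    {
        if (string.IsNullOrWhiteSpace(value))
        {
            throw new InvalidOperationException("Missing setting: " + name);
        }
        return value.Trim();
    }
}
```

With this in place, a call such as CreateSubscription(settings.ClientId, settings.ClientSecret, settings.TenantId) keeps the secret out of the source code.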

Office 365 Admin Consent

Even though the official MSDN article about this topic mentions “This step is not required when using the APIs to access data from your own tenant”, it did not work for me until I completed this step. So, I have added it here.

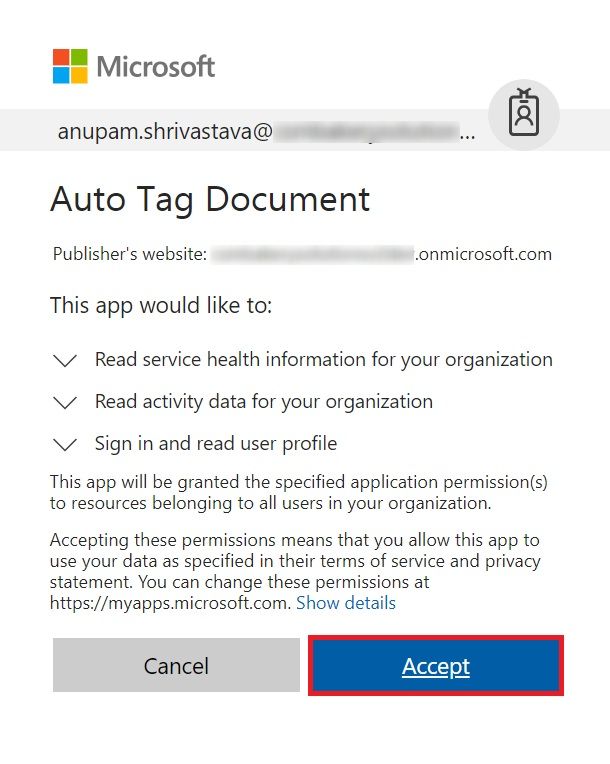

Now that the application has been registered with required permissions, a tenant admin will have to explicitly grant these permissions to allow access of their tenant’s data by using the APIs.

Log in as a tenant admin and browse to a URL in this format:

https://login.windows.net/common/oauth2/authorize?response_type=code&resource=https%3A%2F%2Fmanage.office.com&client_id={client_id}&redirect_uri={redirect_uri}

So, in our case, we’ll use the client ID from the “Get Client ID” step and http://localhost as the redirect URI, so the URL to browse to will be:

https://login.windows.net/common/oauth2/authorize?response_type=code&resource=https%3A%2F%2Fmanage.office.com&client_id=1d841fda-1e87-45fa-9283-f9fc949560aa&redirect_uri=http://localhost

It should open a consent screen (see the screenshot) that lists all the permissions being requested.

Click Accept when this screen is shown. The application is now (almost) ready to call the Office 365 Management Activity APIs.

Please note: since we are not using a valid redirect URI, after the O365 tenant admin grants the permission we’ll get a “page cannot be found” error when the browser tries to load http://localhost. But don’t worry; if Accept was clicked, the required consent has been given.
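If you prefer to generate the consent URL instead of editing it by hand, a small helper like the one below can do it. This is only a sketch: the class and method names are mine, and it simply URL-encodes each query value with Uri.EscapeDataString, so the redirect URI comes out encoded (http%3A%2F%2Flocalhost).

```csharp
using System;

public static class AdminConsent
{
    // Builds the admin consent URL in the format shown above.
    // Every query value is URL-encoded, including the redirect URI.
    public static string BuildUrl(string clientId, string redirectUri)
    {
        return "https://login.windows.net/common/oauth2/authorize"
            + "?response_type=code"
            + "&resource=" + Uri.EscapeDataString("https://manage.office.com")
            + "&client_id=" + Uri.EscapeDataString(clientId)
            + "&redirect_uri=" + Uri.EscapeDataString(redirectUri);
    }
}
```

For example, AdminConsent.BuildUrl("1d841fda-1e87-45fa-9283-f9fc949560aa", "http://localhost") produces the same URL as above, apart from the encoded redirect URI.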

Create Subscription

As mentioned in the article here, to begin retrieving content blobs for a tenant, we first need to create a subscription to the desired content types, Audit.SharePoint in our case. After the subscription is created:

- We can either poll regularly to discover new content blobs that are available for download

- Or we can register a webhook endpoint with the subscription and it will send notifications to this endpoint as new content blobs are available.

As discussed earlier, we are going to focus on the polling-based solution.

Let’s go ahead and create the subscription.

We will need to add the following NuGet packages to make this code work:

- Microsoft.IdentityModel.Clients.ActiveDirectory, version 2.28.0. If you use a higher version, you may need to change the code below a bit to make an async call to AcquireToken.

- Newtonsoft.Json, version 11.0.2

- References to Microsoft.SharePoint.Client and Microsoft.SharePoint.Client.Runtime, version 16.0.0.0

We’ll need the Client ID, Client Secret and Tenant ID which we had captured earlier.

[code]

// Requires: using System; using System.IO; using System.Net;
// using Microsoft.IdentityModel.Clients.ActiveDirectory;
public void CreateSubscription(string clientID, string clientSecret, string tenantID)
{
    // Acquire an app-only access token for the Management API (ADAL 2.x synchronous call)
    ClientCredential cred = new ClientCredential(clientID, clientSecret);
    AuthenticationContext ctx = new AuthenticationContext("https://login.windows.net/" + tenantID);
    string resourceUri = "https://manage.office.com";
    AuthenticationResult res = ctx.AcquireToken(resourceUri, cred);

    // Start the Audit.SharePoint subscription; the POST carries no body
    HttpWebRequest req = (HttpWebRequest)WebRequest.Create("https://manage.office.com/api/v1.0/" + tenantID + "/activity/feed/subscriptions/start?contentType=Audit.SharePoint");
    req.Headers.Add("Authorization", "Bearer " + res.AccessToken);
    req.ContentType = "application/json";
    req.Method = "POST";
    req.ContentLength = 0;

    using (HttpWebResponse response = (HttpWebResponse)req.GetResponse())
    using (StreamReader reader = new StreamReader(response.GetResponseStream()))
    {
        // The response describes the subscription that was started
        string responseFromServer = reader.ReadToEnd();
        Console.WriteLine(responseFromServer);
    }
}

[/code]

Once this function has been executed, it may take up to 24 hours before you start getting results from the Management API, so be patient.
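If you are on a newer version of the ADAL package, where the synchronous AcquireToken is no longer available, the same call can be sketched with AcquireTokenAsync and HttpClient. Treat this as an illustration under that assumption rather than a drop-in replacement: the class and method names are mine, and on failure it includes the response body in the exception, since the body usually explains a 400 or 403.

```csharp
using System.Net.Http;
using System.Net.Http.Headers;
using System.Threading.Tasks;
using Microsoft.IdentityModel.Clients.ActiveDirectory;

public static class SubscriptionClient
{
    // Builds the "start subscription" URL for a tenant and content type.
    public static string StartUrl(string tenantId, string contentType)
    {
        return "https://manage.office.com/api/v1.0/" + tenantId
            + "/activity/feed/subscriptions/start?contentType=" + contentType;
    }

    // Acquires a token asynchronously and starts the Audit.SharePoint subscription.
    public static async Task<string> StartAsync(string clientId, string clientSecret, string tenantId)
    {
        var context = new AuthenticationContext("https://login.windows.net/" + tenantId);
        AuthenticationResult token = await context.AcquireTokenAsync(
            "https://manage.office.com", new ClientCredential(clientId, clientSecret));

        using (var http = new HttpClient())
        {
            http.DefaultRequestHeaders.Authorization =
                new AuthenticationHeaderValue("Bearer", token.AccessToken);

            // The POST carries no body, so null content is passed.
            HttpResponseMessage response =
                await http.PostAsync(StartUrl(tenantId, "Audit.SharePoint"), null);
            string body = await response.Content.ReadAsStringAsync();
            if (!response.IsSuccessStatusCode)
            {
                throw new HttpRequestException((int)response.StatusCode + ": " + body);
            }
            return body;
        }
    }
}
```

From a console application, this could be called as SubscriptionClient.StartAsync(clientID, clientSecret, tenantID).GetAwaiter().GetResult().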

Verify Subscription

You can check whether the subscription was created successfully by using the function below.

[code]

public void GETSubscription(string clientID, string clientSecret, string tenantID)
{
    ClientCredential cred = new ClientCredential(clientID, clientSecret);
    AuthenticationContext ctx = new AuthenticationContext("https://login.windows.net/" + tenantID);
    string resourceUri = "https://manage.office.com";
    AuthenticationResult res = ctx.AcquireToken(resourceUri, cred);

    // List the current subscriptions for the tenant, with the status of each content type
    HttpWebRequest req = (HttpWebRequest)WebRequest.Create("https://manage.office.com/api/v1.0/" + tenantID + "/activity/feed/subscriptions/list");
    req.Headers.Add("Authorization", "Bearer " + res.AccessToken);
    req.ContentType = "application/json";
    req.Method = "GET";

    using (HttpWebResponse response = (HttpWebResponse)req.GetResponse())
    using (StreamReader reader = new StreamReader(response.GetResponseStream()))
    {
        string responseFromServer = reader.ReadToEnd();
        Console.WriteLine(responseFromServer);
    }
}

[/code]
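To turn that check into a yes/no answer, the response can be parsed. The sketch below assumes the JSON comes from the /activity/feed/subscriptions/list endpoint and is an array of objects with contentType and status properties, where an active subscription has the status “enabled”. The class and method names are mine.

```csharp
using System;
using Newtonsoft.Json.Linq;

public static class SubscriptionStatus
{
    // Returns true when the subscription list contains the given content type
    // with status "enabled". Assumption: listResponseJson is a JSON array of
    // objects that carry "contentType" and "status" properties.
    public static bool IsEnabled(string listResponseJson, string contentType)
    {
        foreach (JToken subscription in JArray.Parse(listResponseJson))
        {
            if ((string)subscription["contentType"] == contentType)
            {
                return string.Equals((string)subscription["status"], "enabled",
                    StringComparison.OrdinalIgnoreCase);
            }
        }
        return false;
    }
}
```

For example, SubscriptionStatus.IsEnabled(responseFromServer, "Audit.SharePoint") tells us whether it is worth polling for content yet.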

Get Content Blobs

Once the subscription is in place, we can start polling the API to find out whether any content blobs are available.

[code]

// Also requires: using System.Threading.Tasks; using Newtonsoft.Json.Linq;
public void ListContent(string clientID, string clientSecret, string tenantID)
{
    ClientCredential cred = new ClientCredential(clientID, clientSecret);
    AuthenticationContext ctx = new AuthenticationContext("https://login.windows.net/" + tenantID);
    string resourceUri = "https://manage.office.com";
    AuthenticationResult res = ctx.AcquireToken(resourceUri, cred);

    // Passing hardcoded start time and end time here; you can pass those in as variables.
    // Just store the last run time of this script in a text file/registry key and use that as the start time.
    HttpWebRequest req = (HttpWebRequest)WebRequest.Create("https://manage.office.com/api/v1.0/" + tenantID + "/activity/feed/subscriptions/content?contentType=Audit.SharePoint&startTime=2018-05-30T06:35:00Z&endTime=2018-05-30T23:35:00Z");
    req.Headers.Add("Authorization", "Bearer " + res.AccessToken);
    req.ContentType = "application/json";
    req.Method = "GET";

    string responseFromServer;
    using (HttpWebResponse response = (HttpWebResponse)req.GetResponse())
    using (StreamReader reader = new StreamReader(response.GetResponseStream()))
    {
        responseFromServer = reader.ReadToEnd();
    }

    // Getting all notifications (content blob descriptors) as a JArray
    JArray notifications = JArray.Parse(responseFromServer);
    var startTime = DateTime.Now;
    Console.WriteLine("Total Notifications: " + notifications.Count + " === Start Time: " + startTime);

    // Since the sequence of notifications doesn't matter, we can process them in parallel for better performance
    Parallel.For(0, notifications.Count, delegate (int i)
    {
        try
        {
            // Read the actual events from the content blob, whose location comes as contentUri
            ReadContent(clientID, clientSecret, tenantID, notifications[i]["contentUri"].ToString());
        }
        catch (Exception ex)
        {
            // Report the failure instead of swallowing it silently, then carry on with the other blobs
            Console.WriteLine("Failed to read blob " + i + ": " + ex.Message);
        }
    });

    var endTime = DateTime.Now;
    Console.WriteLine(" === End Time: " + endTime);
    Console.WriteLine("Time Taken: " + (endTime - startTime).ToString());

    // Remove this line when the job runs unattended
    Console.ReadKey();
}

[/code]

As you can see above, we are getting a list of all the content blobs and then looping through them to read the content from the ContentURI.
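The hardcoded startTime and endTime in the code above can be replaced with values derived from the last run, as its comment suggests. Here is a minimal sketch of that idea. It assumes the API accepts windows of at most 24 hours that start no more than 7 days in the past, so a longer gap is split into several windows; the class name, the file-based storage and the one-hour safety margin are my own choices.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.IO;

public static class PollingWindow
{
    private const string Format = "yyyy-MM-dd'T'HH:mm:ss'Z'";

    // Splits the period from lastRunUtc to nowUtc into windows of at most 24 hours.
    // The start is clamped to just under 7 days back (one hour of safety margin).
    public static List<Tuple<DateTime, DateTime>> Split(DateTime lastRunUtc, DateTime nowUtc)
    {
        var windows = new List<Tuple<DateTime, DateTime>>();
        DateTime oldestAllowed = nowUtc.AddDays(-7).AddHours(1);
        DateTime start = lastRunUtc < oldestAllowed ? oldestAllowed : lastRunUtc;
        while (start < nowUtc)
        {
            DateTime end = start.AddHours(24);
            if (end > nowUtc) end = nowUtc;
            windows.Add(Tuple.Create(start, end));
            start = end;
        }
        return windows;
    }

    // Formats one window as the query string fragment used in the content URL.
    public static string ToQuery(DateTime startUtc, DateTime endUtc)
    {
        return "&startTime=" + startUtc.ToString(Format, CultureInfo.InvariantCulture)
             + "&endTime=" + endUtc.ToString(Format, CultureInfo.InvariantCulture);
    }

    // Reads the last run time from a text file, or returns the fallback if the file is missing.
    public static DateTime ReadLastRun(string path, DateTime fallbackUtc)
    {
        if (!File.Exists(path)) return fallbackUtc;
        return DateTime.Parse(File.ReadAllText(path).Trim(),
            CultureInfo.InvariantCulture, DateTimeStyles.RoundtripKind);
    }

    // Stores the run time in round-trip ("o") format so the UTC kind survives.
    public static void WriteLastRun(string path, DateTime utc)
    {
        File.WriteAllText(path, utc.ToString("o", CultureInfo.InvariantCulture));
    }
}
```

Each run would read the last run time, request the content list once per window with ToQuery appended to the URL, and write the new time only after all windows have been processed.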

The content blob comes in this format

[code]

{
  "contentUri": "https://manage.office.com/api/v1.0/7256234d-62f4-4e95-ad9c-c1f7a70f3f50/activity/feed/audit/20180530064751527012228$20180530064751527012228$audit_sharepoint$Audit_SharePoint",
  "contentId": "20180530064751527012228$20180530064751527012228$audit_sharepoint$Audit_SharePoint",
  "contentType": "Audit.SharePoint",
  "contentCreated": "2018-05-30T06:47:51.527Z",
  "contentExpiration": "2018-06-06T06:47:51.527Z"
}

[/code]

We will get the value of contentUri from here, which is the location of the blob to get the list of actual events.
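One thing to keep in mind for busy tenants: as far as I know, the content list can be paged, in which case the response carries a NextPageUri header pointing to the next page. The sketch below collects the contentUri values across all pages. To keep it independent of any HTTP code, the page fetch is passed in as a delegate; the ContentPage type and all names are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using Newtonsoft.Json.Linq;

public sealed class ContentPage
{
    // JSON array of content blob descriptors, in the format shown above.
    public string Body { get; set; }

    // Value of the NextPageUri response header, or null on the last page.
    public string NextPageUri { get; set; }
}

public static class ContentListing
{
    // Follows NextPageUri from page to page and returns every contentUri found.
    // fetchPage performs the actual GET for a URL and returns body plus header.
    public static List<string> CollectContentUris(string firstPageUrl, Func<string, ContentPage> fetchPage)
    {
        var uris = new List<string>();
        string url = firstPageUrl;
        while (!string.IsNullOrEmpty(url))
        {
            ContentPage page = fetchPage(url);
            foreach (JToken blob in JArray.Parse(page.Body))
            {
                uris.Add((string)blob["contentUri"]);
            }
            url = page.NextPageUri;
        }
        return uris;
    }
}
```

With HttpWebRequest, the fetchPage delegate would read the body as in ListContent and take the header from response.Headers["NextPageUri"].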

Get Events

The ReadContent() function above actually reads the list of events from that contentUri.

[code]

public void ReadContent(string clientID, string clientSecret, string tenantID, string contenturi)
{
    ClientCredential cred = new ClientCredential(clientID, clientSecret);
    AuthenticationContext ctx = new AuthenticationContext("https://login.windows.net/" + tenantID);
    string resourceUri = "https://manage.office.com";
    AuthenticationResult res = ctx.AcquireToken(resourceUri, cred);

    // Read the events from the contentUri
    HttpWebRequest req = (HttpWebRequest)WebRequest.Create(contenturi);
    req.Headers.Add("Authorization", "Bearer " + res.AccessToken);
    req.ContentType = "application/json";
    req.Method = "GET";

    string responseFromServer;
    using (HttpWebResponse response = (HttpWebResponse)req.GetResponse())
    using (StreamReader reader = new StreamReader(response.GetResponseStream()))
    {
        responseFromServer = reader.ReadToEnd();
    }

    JArray auditLogEvents = JArray.Parse(responseFromServer);
    Console.WriteLine("Total Audit Log Events: " + auditLogEvents.Count);

    // Just show the list of events. You can find events like "FileUploaded" and then trigger the metadata update
    for (int i = 0; i < auditLogEvents.Count; i++)
    {
        // Skip events that carry no Operation field instead of failing on them
        JToken operation = auditLogEvents[i].SelectToken("Operation");
        if (operation != null && operation.ToString() == "FileUploaded")
        {
            Console.WriteLine("Site URL:" + auditLogEvents[i].SelectToken("SiteUrl"));
            Console.WriteLine("Object ID:" + auditLogEvents[i].SelectToken("ObjectId"));
            Console.WriteLine("Creation Time:" + auditLogEvents[i].SelectToken("CreationTime"));
        }
    }
}

[/code]

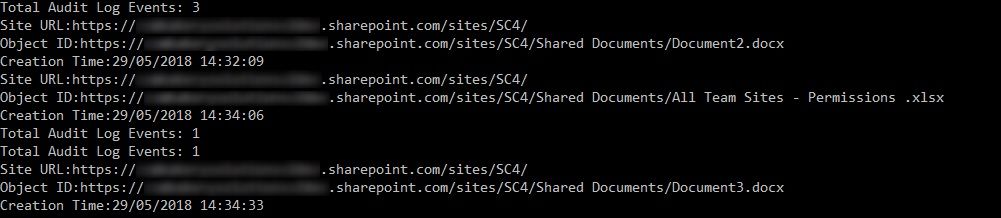

I am just showing the list of events under each blob here with if condition to find events like “FileUploaded” to trigger the metadata update as explained in this article.

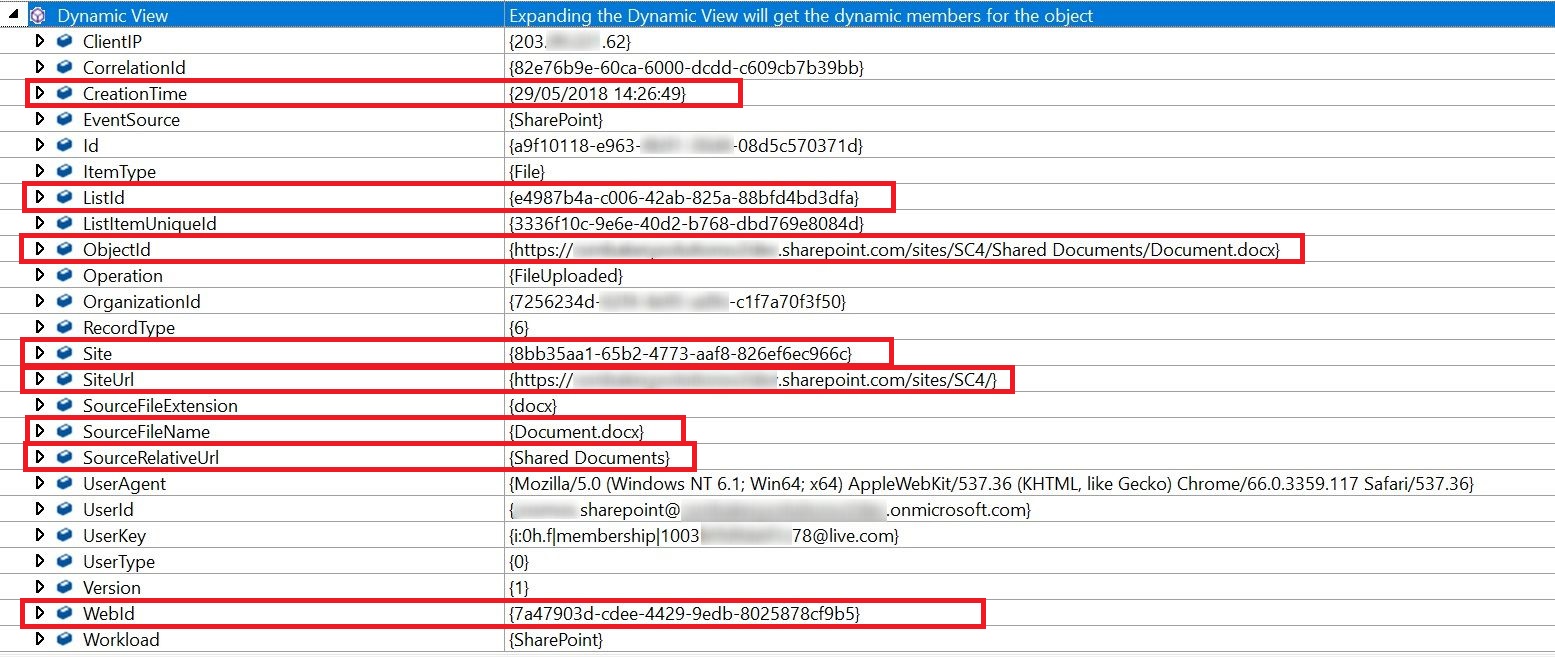

Look at the values which we get as part of schema. We can fetch all of these by using the field names under SelectToken in code above.

See the output of above function

Now, you can extract and pass the required values like SiteURL, Document Library URL etc. and pass those to identify documents for metadata tagging as explained in this article.
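As an illustration of that extraction, the sketch below turns the events of one blob into a small list of uploaded files. It assumes each “FileUploaded” event carries the SiteUrl, SourceRelativeUrl, SourceFileName and ObjectId fields from the schema; the UploadedFile type and the method name are hypothetical.

```csharp
using System.Collections.Generic;
using Newtonsoft.Json.Linq;

public sealed class UploadedFile
{
    public string SiteUrl { get; set; }      // site the file was uploaded to
    public string RelativeUrl { get; set; }  // library/folder path relative to the site
    public string FileName { get; set; }
    public string ObjectId { get; set; }     // full URL of the uploaded file
}

public static class UploadEvents
{
    // Picks the "FileUploaded" events out of one blob and maps them to UploadedFile.
    // Assumption: eventsJson is the JSON array returned for a contentUri.
    public static List<UploadedFile> FromBlob(string eventsJson)
    {
        var files = new List<UploadedFile>();
        foreach (JToken evt in JArray.Parse(eventsJson))
        {
            if ((string)evt["Operation"] != "FileUploaded") continue;
            files.Add(new UploadedFile
            {
                SiteUrl = (string)evt["SiteUrl"],
                RelativeUrl = (string)evt["SourceRelativeUrl"],
                FileName = (string)evt["SourceFileName"],
                ObjectId = (string)evt["ObjectId"]
            });
        }
        return files;
    }
}
```

Each UploadedFile then has what the tagging code needs to locate the document in its library.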

As you can guess, this can be used to identify various other operations performed in a SharePoint environment, like document downloads, check-ins/check-outs, permission changes, etc. You can check out all the supported events here under the “Enum: SharePointAuditOperation – Type: Edm.Int32” heading.

All those events can be identified and an appropriate automated action can be taken as required by the defined governance. For example, if someone is added to a SharePoint group meant for Owners/Admins, we can send a mail to their manager or even remove that user directly from the group, if required. You get the idea 🙂
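One way to organize such governance rules is a small dispatcher that maps operation names to actions, so that adding a new rule does not mean touching the loop. This is only a sketch: the class is hypothetical, and the operation names you register should be checked against the SharePointAuditOperation list.

```csharp
using System;
using System.Collections.Generic;
using Newtonsoft.Json.Linq;

public sealed class GovernanceDispatcher
{
    private readonly Dictionary<string, Action<JToken>> handlers =
        new Dictionary<string, Action<JToken>>(StringComparer.OrdinalIgnoreCase);

    // Registers the action to run for one operation name, for example "FileUploaded".
    public void On(string operation, Action<JToken> handler)
    {
        handlers[operation] = handler;
    }

    // Runs the matching handler for every event in a blob and returns how many were handled.
    public int Dispatch(string eventsJson)
    {
        int handled = 0;
        foreach (JToken evt in JArray.Parse(eventsJson))
        {
            string operation = (string)evt["Operation"];
            Action<JToken> handler;
            if (operation != null && handlers.TryGetValue(operation, out handler))
            {
                handler(evt);
                handled++;
            }
        }
        return handled;
    }
}
```

For example, dispatcher.On("FileUploaded", evt => { /* tag the document */ }) and dispatcher.On("AddedToGroup", evt => { /* notify the manager */ }) would cover the two scenarios mentioned in this article, with Dispatch called once per blob from ReadContent.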

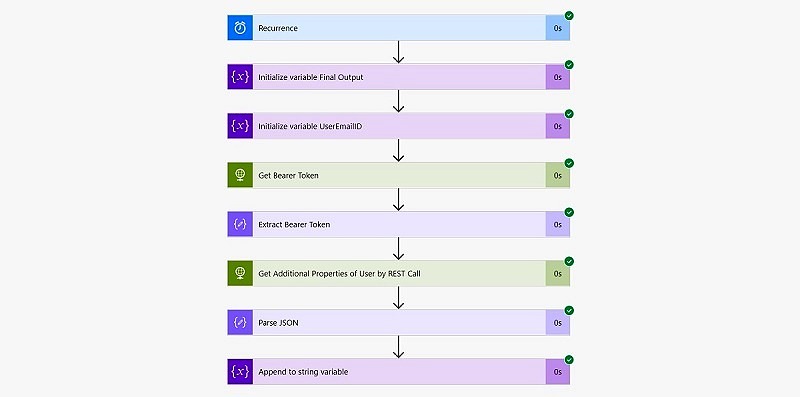

We can call these APIs from Microsoft Flow as well to save some coding effort. I will write a separate article detailing that 🙂

Conclusion

Office 365 Management Activity API is a powerful feature but somehow, I feel it’s less utilized currently. For me, not having access to granular events is a big reason for that. However, it can still be used to automate a lot of governance related tasks which would otherwise require manual observations and interventions.

Hope this helps.

Enjoy,

Anupam

12 comments

Thank you for the great article Anupam, this is exactly what I was looking for–though I want to do it as you mentioned at the end with MS Flow

Great article Anupam I just have a quick question. I received 403 forbidden when i attempted to check if the subscription was created. just wanted to make sure that’s due to having to wait up to 24 hours and not something i messed up. Thanks.

If the CreateSubscription code was executed successfully then it should be fine… Just wait and let me know if that still doesn’t work.

While getting/checking the subscription, i am getting 400- bad request error. and it has been more than 24hours that we have created the subscription.

You can try executing the CreateSubscription function again. If a subscription was already created, it should throw an exception. I have seen subscriptions take even up to 72 hours. Also, if you have copied and pasted the entire code from this article, try removing and retyping the single and double quotes. Sometimes these quotes are pasted as different characters, resulting in such errors.

Hi anupam, thanks for this article, i am getting content blobs out of order. Do you know how can i get ordered content blobs?

Thanks

You can try using the new service communication API – https://docs.microsoft.com/en-us/office/office-365-management-api/office-365-service-communications-api-reference. See under “Get Messages” section.

We are getting below error when starting Audit.SharePoint subscription.

“error”: {

“code”: “StartSubscription [TenantId=,ContentType=Audit.SharePoint,ApplicationId=,PublisherId=] failed. Exception”,

“message”: “Microsoft.Office.Compliance.Audit.DataServiceException: Tenant does not exist.\r\n at Microsoft.Office.Compliance.Audit.API.AzureManager.GetSubscriptionTableClientForTenant(Guid tenantID, Boolean throwIfTenantNull)\r\n at Microsoft.Office.Compliance.Audit.API.AzureManager.d__22.MoveNext()\r\n— End of stack trace from previous location where exception was thrown —\r\n at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()\r\n at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)\r\n at Microsoft.Office.Compliance.Audit.API.StartController.d__0.MoveNext()”

}

We checked the permissions of registered app in Azure Active Directory. Checked the Tenant ID, Client ID, Secret key of test tenancy .Checked if Audit recording is turned on in the Security and Compliance Admin. Checked the authentication token generated. Everything seems to be fine.

Can you help me resolve this issue? also can you confirm if this requires paid Azure subscription or it works with the default azure associated with EMS Office 365 E3 license.

I’m getting “The remote server returned an error: (400) Bad Request.” in CreateSubscription(). I have cross checked and ClientID, ClientSecret and TenantID are all correct. InnerException is null

Are you able to get the access token successfully at this line: AuthenticationResult res = ctx.AcquireToken(resourceUri, cred)? Also, try to replace the double quotes by removing and manually retyping them… I have seen similar issues with double quotes copied from web sites…

Thank you for the excellent post.

I’m able to use this endpoint: activity/feed/subscriptions/content?contentType=Audit.AzureActiveDirectory. But then I’m stuck at using Powershell to get the content blobs. Currently it said that the blob key in the url is not valid. Do you have any idea how to fix it?

Thank you alot again!

Are you stuck at this line?

HttpWebRequest req = HttpWebRequest.Create("https://manage.office.com/api/v1.0/" + tenantID + "/activity/feed/subscriptions/content?contentType=Audit.SharePoint&startTime=2018-05-30T06:35:00Z&endTime=2018-05-30T23:35:00Z") as HttpWebRequest;

Are you able to verify your subscription successfully?